A controversial bill addressing AI-altered intimate images has reached its final reading in the Colorado Senate, stirring debate over privacy, consent, and emotional trauma in the digital age.

If passed, Senate Bill 25-288 will give people affected by explicit digital depictions—particularly those generated or altered by AI—the power to pursue both civil and criminal actions against the perpetrators. Supporters say the bill is overdue. Critics worry it may raise new legal challenges.

What the Bill Actually Does

At the heart of SB25-288 is a growing problem: intimate photos or videos created without someone’s consent using artificial intelligence or other image manipulation software. Deepfake porn, once the stuff of obscure tech forums, is now widespread—and deeply damaging.

The bill creates a clear legal pathway for affected individuals to take action if:

- They are identifiable in the image
- They did not give consent
- They suffer emotional distress
- Or the person who posted the image acted recklessly

Even if the photo or video was AI-generated and never actually happened, the legal protections still apply if someone reasonably believes the image is real.

Why This Matters Now

This isn’t just theoretical. Cases have exploded across the U.S., especially among teenagers and young adults. AI tools like Midjourney and Stable Diffusion, built for art and storytelling, have been repurposed by some to create fake porn.

The National Center for Missing & Exploited Children says reports of non-consensual AI-generated imagery jumped by 61% in just six months.

In Colorado, a high school student was recently targeted when an explicit AI-altered image of her circulated via Snapchat. Though the photo wasn’t real, the humiliation was. Her parents, speaking anonymously to local media, said she stopped going to school and is now in therapy.

Law enforcement had no clear way to prosecute the person who shared the image.

Representative Matt Soper, a Republican representing Mesa and Delta counties, co-sponsored the bill. He told reporters, “Technology’s moving fast, and our laws can’t lag behind. People deserve a way to fight back when their dignity is destroyed by something fake.”

What Makes This Bill Different

There are already laws on the books in Colorado against revenge porn and posting private images, but SB25-288 goes further.

It changes three key areas:

- Updates the definition of “sexually exploitative material” in cases involving children
- Strengthens criminal penalties for distributing private images for harassment
- Expands civil liability even in AI-generated cases

Here’s a quick breakdown of what that means for both victims and offenders:

| Provision | Current Law | SB25-288 Changes |
|---|---|---|
| Consent Required | Yes | Yes |
| AI-generated Images | Not Clearly Covered | Explicitly Included |
| Civil Action | Limited | Broadened to include emotional distress |
| Child Exploitation Material | Narrowly defined | Now includes AI images |
| Intent Requirement | Must prove intent | Recklessness may suffice |

Critics warn this opens the door to false accusations or misused lawsuits. Civil liberties groups are watching closely.

The Human Toll Behind the Headlines

It’s easy to get lost in the legal language. But behind every AI-generated image, there’s often a very real person dealing with anxiety, humiliation, or worse.

One woman in Denver, who asked not to be named, discovered a deepfake video of herself online. It had been circulating on adult sites for over a year before she even knew it existed.

“Every time I Googled my name, it popped up,” she said. “I couldn’t prove who made it, I couldn’t get it taken down, and the police didn’t even know what to charge someone with.”

She says SB25-288 gave her some hope.

“It’s not just about justice; it’s about healing,” she told us.

Soper and co-sponsors say the civil claims portion of the bill was designed specifically for cases like hers—where proving criminal intent may be tough, but the damage is undeniable.

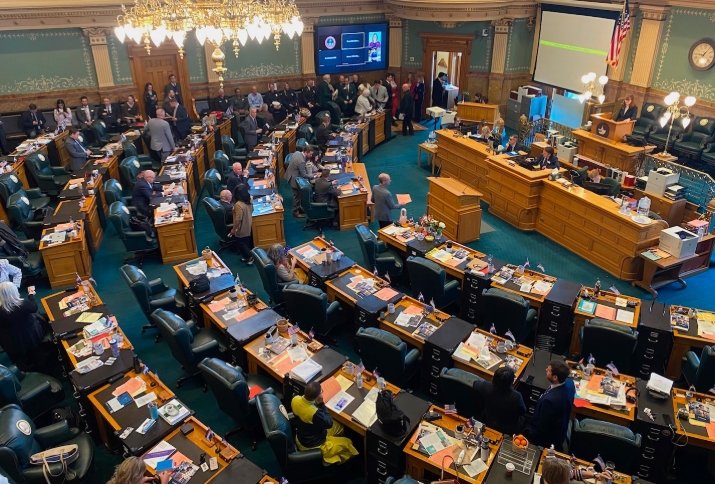

Lawmakers Caught Between Tech and Ethics

Colorado isn’t the only state wrestling with this. At least 14 other states have introduced similar legislation in 2024, though the scope and definitions vary widely. Some focus only on minors. Others make deepfake porn a felony across the board.

In Colorado’s case, SB25-288 is seen as a compromise between strong protections and enforceable standards. But striking that balance hasn’t been easy.

Opponents in the Senate Judiciary Committee voiced concerns about:

- Free speech issues
- Unintended consequences for meme culture
- Enforcement challenges

Still, the bill advanced.

Senator Janet Buckley (D-Boulder) said during debate, “We’re not punishing jokes or satire. We’re going after images meant to humiliate, harass, or harm people in real life.”

What Happens If It Passes

The bill’s final reading could come within days. If passed, it heads to Governor Jared Polis’s desk.

Supporters expect quick approval. Polis has previously signaled interest in digital privacy and was an early backer of Colorado’s 2021 data protection act.

If signed into law, SB25-288 would take effect July 1, 2025.

But will it make a difference?

Digital rights lawyers say enforcement will still be tricky. Tracking down anonymous offenders, proving emotional harm, or linking someone to an AI-generated image takes serious resources.

Still, it’s a start.

And for people whose lives have been derailed by something that never even happened, it’s more than they’ve had before.